Why structured data is no longer just a technical problem — it is a liability question.

AI visibility is becoming the new SEO. Companies pour resources into structured data, knowledge graphs and entity relationships, all in order to be understood, surfaced and trusted by AI systems.

But there is a blind spot in almost every conversation about this topic. While the industry obsesses over how to structure information, almost nobody asks: who is responsible for what AI understands from it?

That question has consequences. Legal ones.

From Content to Meaning Infrastructure

Traditional digital communication was straightforward: you wrote text, users interpreted it. The relationship was human-to-human, mediated by a screen. That era is ending. We are now structuring meaning itself. And that is a fundamentally different act.

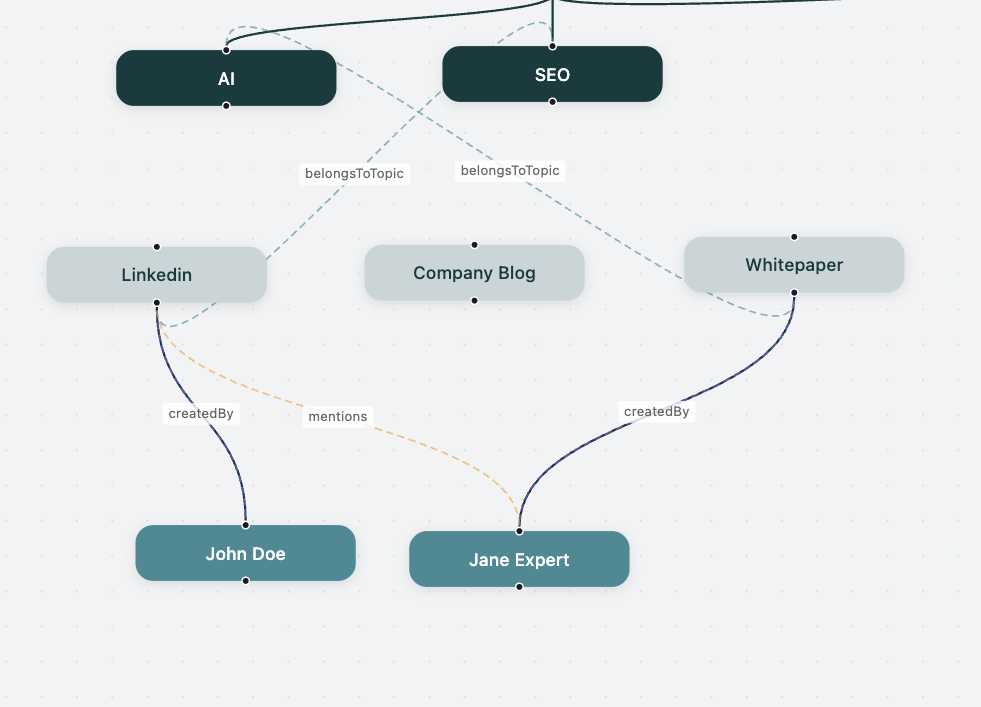

Semantic graphs do not simply describe information. They encode:

- relationships between entities

- hierarchies of authority and relevance

- implied claims about expertise and trust

These are not neutral technical decisions. They are assertions, and they carry weight in ways their authors rarely anticipate.
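To make this concrete, here is a minimal sketch of how a small piece of markup reads once it is flattened into individual statements. The person, the organization and the helper `to_assertions` are hypothetical and exist only for illustration; `knowsAbout` and `worksFor` are ordinary schema.org properties. Real consumers of structured data will process it in their own ways, so treat this as one possible reading, not a description of any particular system.

```python
# Hypothetical schema.org-style markup; every name and value is invented.
markup = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Dr. Jane Example",
    "knowsAbout": "Cardiology",
    "worksFor": {"@type": "Organization", "name": "Example Health GmbH"},
}


def to_assertions(node, subject=None):
    """Flatten nested markup into (subject, predicate, object) statements."""
    subject = subject or node.get("name", "<unnamed>")
    assertions = []
    for key, value in node.items():
        if key.startswith("@") or key == "name":
            continue  # skip JSON-LD keywords and the entity's own label
        if isinstance(value, dict):
            child = value.get("name", "<unnamed>")
            assertions.append((subject, key, child))
            # Recurse so statements about the nested entity are listed too.
            assertions.extend(to_assertions(value, child))
        else:
            assertions.append((subject, key, value))
    return assertions


for s, p, o in to_assertions(markup):
    print(f"{s} --{p}--> {o}")
```

Read this way, two lines of markup become two public statements: that a named person has expertise in cardiology, and that she is affiliated with a named company. Neither was written as a sentence, yet both are now on the record.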

The Hidden Risk: Interpretation at Scale

When you publish structured data, you give up something most people do not realize they had: control over the outcome.

Your structured data enables:

- AI systems to infer relationships you never explicitly stated

- knowledge graphs to connect your information with external sources

- automated systems to generate conclusions based on your structure

The result is what we call Interpretation Risk: a risk that arises not because your data is wrong, but because its meaning is constructed externally, by systems you do not control.

Your data may be accurate. The conclusions drawn from it may not be, and the gap between the two is where liability lives.
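The gap between accurate data and inaccurate conclusions can be illustrated with a toy example. The sketch below assumes a deliberately naive inference rule of our own invention (`infer_endorsements`), a made-up product and a made-up external source. No real AI system is claimed to work this way; the point is only that a derived statement can appear that nobody published.

```python
# Statements the publisher actually made (all names are invented).
published = {
    ("Dr. Jane Example", "knowsAbout", "Cardiology"),
    ("Dr. Jane Example", "worksFor", "Example Health"),
    ("Example Health", "sells", "CardioPlus"),
}

# A statement from a third-party source the publisher does not control.
external = {
    ("CardioPlus", "category", "Cardiology"),
}


def infer_endorsements(triples):
    """Toy rule (an assumption, not a real system's logic): an expert is
    treated as vouching for any product their employer sells in their field."""
    inferred = set()
    for person, p1, org in triples:
        if p1 != "worksFor":
            continue
        for seller, p2, product in triples:
            if p2 != "sells" or seller != org:
                continue
            for item, p3, topic in triples:
                if (
                    p3 == "category"
                    and item == product
                    and (person, "knowsAbout", topic) in triples
                ):
                    inferred.add((person, "endorses", product))
    return inferred


combined = published | external
derived = infer_endorsements(combined) - combined
print(derived)
```

Every input statement here is true. The endorsement is not in the published data, and it only appears once the external statement is merged in. That is the pattern we mean by Interpretation Risk.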

When Structure Becomes Narrative

Consider a seemingly simple set of decisions:

- You link an expert’s name to a topic.

- You associate a product with a health claim.

- You define a hierarchy between two concepts.

To a human reader, these are contextual signals, easily understood as partial or qualified. To an AI system processing structured data, they become authority signals, expertise attributions and relevance weightings.

The structure you built for technical visibility becomes, unintentionally, your brand narrative. And unlike a press release you can retract, structured data, once published, is out of your hands.

[Figure: Knowledge graphs as authority signals for your company]

The Legal Blind Spot

Current legal frameworks were not designed for this reality. They assume content is authored, meaning is explicit and responsibility is traceable to a human decision.

In semantic systems, none of that holds. Meaning is inferred, relationships are interpreted and outputs are generated dynamically, often by systems operating far beyond the original publisher’s awareness.

The result is a structural gap: there is no clear legal layer governing AI interpretation of structured data. Responsibility is diffuse, liability is unassigned and risk accumulates quietly, until something goes wrong.

Introducing the Legal Layer of AI Visibility

Operating safely in this environment requires more than good technical infrastructure or careful semantic modeling. It requires a new category of thinking entirely.

We call it the Legal Layer of AI Visibility. It is the governance framework that sits beneath your structured data and defines:

- what your data represents and what it explicitly does not

- who bears responsibility for how it is interpreted

- how risk is allocated when AI systems generate outputs from your structure

This layer is not optional. It is the difference between publishing with intention and publishing with exposure.

What This Looks Like in Practice

At planeed, we have identified three components that every organization needs to address:

- Semantic Responsibility: Before publishing any structured data, organizations must validate their entity relationships, topic connections and implied claims. What looks like a technical mapping may function as a public assertion. Treat it accordingly.

- Controlled Publication: Once data is published, it can be reused, remixed and reinterpreted by systems you will never see. There is no “take back”. Publication must be treated as a permanent act with permanent consequences and scoped accordingly.

- Explicit Risk Framing: Organizations need clear, documented positions on what they do not guarantee: the accuracy of AI interpretations, control over derived outputs and liability for meaning constructed by third-party systems. Silence on these points is not neutrality — it is exposure.
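As a rough illustration of the first component, a pre-publication review can be as simple as flagging every statement that uses a sensitive relationship and has no recorded sign-off. The sketch below is an assumption about how such a check might look. The `Statement` type, the `SENSITIVE_PREDICATES` policy and the `review` function are hypothetical names, and which relationships count as sensitive is a decision each organization has to make for itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy: relationships that function as public claims.
SENSITIVE_PREDICATES = {"knowsAbout", "healthClaim", "endorses"}


@dataclass(frozen=True)
class Statement:
    subject: str
    predicate: str
    obj: str
    approved_by: Optional[str] = None  # reviewer who signed off, if any


def review(statements):
    """Return the statements that still need sign-off before publication."""
    return [
        s
        for s in statements
        if s.predicate in SENSITIVE_PREDICATES and s.approved_by is None
    ]


# Invented example data.
draft = [
    Statement("Dr. Jane Example", "knowsAbout", "Cardiology", approved_by="legal"),
    Statement("CardioPlus", "healthClaim", "supports heart health"),
    Statement("Example Health", "location", "Berlin"),
]

for s in review(draft):
    print(f"Needs review: {s.subject} {s.predicate} {s.obj}")
```

In this draft only the health claim is held back: the expertise link has a recorded approval and the location is not on the sensitive list. A check like this does not decide anything on its own. It only makes sure that a human decision exists, and is documented, before a mapping becomes a public assertion.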

Why This Matters Now

We are at the early stages of a shift whose full implications are not yet visible. The trajectory, however, is clear:

- Semantic data is gaining importance.

- Content is becoming structured meaning.

- Visibility is becoming machine interpretation.

The companies that succeed in this environment will not simply be the ones who structure their data best. They will be the ones who understand — and actively manage — the consequences of that structure.

Just as privacy concerns gave rise to GDPR and security concerns gave rise to ISO standards, AI visibility will generate its own standards for semantic responsibility. This is not regulation yet. But it will be.

The Question That Changes Everything

The industry has spent the last few years asking: “How do we become visible in AI?”

That question, while valid, is incomplete. The more important question — the one that will define legal exposure, brand integrity and long-term trust — is this: What are we actually making visible? And who controls its meaning?

That is the legal layer of AI visibility. And it is time to start building it.
